Because large language models operate using neuron-like structures that may tie together many different concepts and patterns, it is difficult for AI developers to tweak their models to change their behavior. If you don’t know which neurons are connected to which concepts, you don’t know which neurons to change.

On May 21, Anthropic released a very detailed map of the inner workings of a fine-tuned version of its Claude AI, specifically the Claude 3 Sonnet 3.0 model. About two weeks later, OpenAI published its own research aimed at figuring out how GPT-4 interprets patterns.

Using Anthropic’s map, researchers can explore how neuron-like data points, called features, affect a generative AI’s output. Otherwise, people can only see the output itself.

Some of these features are “safety-relevant,” meaning that if people can reliably identify them, it could help tune generative AI to avoid potentially dangerous topics or behaviors. The features are also useful for adjusting classification, which may affect bias.

What did Anthropic discover?

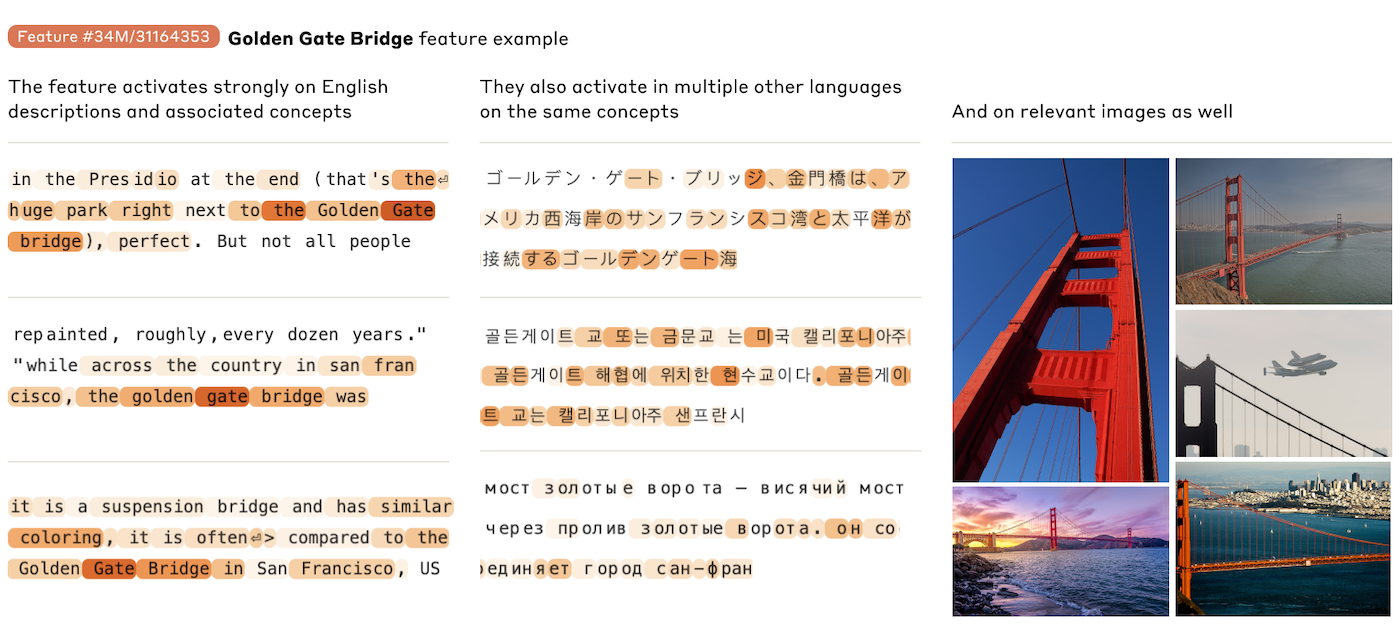

Anthropic researchers extracted interpretable features from Claude 3, a current-generation large language model. Interpretable features can be translated from model-readable numbers into human-understandable concepts.

Interpretable features may apply to the same concept in different languages, and to both images and text.

“Our high-level goal in this work was to decompose the activations of the model (Claude 3 Sonnet) into more interpretable components,” the researchers wrote.

“One hope for interpretability is that it can serve as a kind of ‘safety test set’, allowing us to judge whether models that appear safe during training are actually safe when deployed,” they said.

Features are produced by sparse autoencoders, a type of neural network architecture. During AI training, sparse autoencoders are guided by scaling laws, among other things. Identifying features therefore gives researchers a look at the rules governing which topics the AI associates with one another. In short, Anthropic used sparse autoencoders to uncover and analyze features.
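To make the idea concrete, here is a minimal sketch of a sparse autoencoder in Python with NumPy. It is an illustration only, not Anthropic's or OpenAI's implementation: the dimensions, the random weights, the `l1_coeff` value and the function names are all assumptions chosen for the example. It shows the general shape of the technique: an encoder expands a model activation vector into a larger set of non-negative feature activations, a decoder rebuilds the original vector from them, and an L1 penalty encourages most features to stay at zero.

```python
import numpy as np

rng = np.random.default_rng(0)

D_MODEL = 8      # width of the toy activation vector (hypothetical)
N_FEATURES = 32  # number of features, larger than D_MODEL (hypothetical)

# Random weights stand in for a trained autoencoder.
W_enc = rng.normal(scale=0.1, size=(D_MODEL, N_FEATURES))
b_enc = np.zeros(N_FEATURES)
W_dec = rng.normal(scale=0.1, size=(N_FEATURES, D_MODEL))
b_dec = np.zeros(D_MODEL)


def encode(x):
    """Map an activation vector to non-negative feature activations (ReLU)."""
    return np.maximum(0.0, x @ W_enc + b_enc)


def decode(f):
    """Rebuild the activation vector as a weighted sum of feature directions."""
    return f @ W_dec + b_dec


def sae_loss(x, l1_coeff=1e-3):
    """Reconstruction error plus an L1 penalty that pushes features toward zero."""
    f = encode(x)
    x_hat = decode(f)
    mse = np.mean((x - x_hat) ** 2)
    sparsity = l1_coeff * np.sum(np.abs(f))
    return mse + sparsity


x = rng.normal(size=D_MODEL)  # stand-in for one activation vector from a model
features = encode(x)
print(features.shape, int(np.count_nonzero(features)), float(sae_loss(x)))
```

In a trained autoencoder, only a few entries of `features` would be non-zero for any given input, and each one could be inspected to see which inputs activate it most strongly.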

“We found a variety of highly abstract features that both responded to and behaviorally elicited abstract behavior,” the researchers wrote.

For more information on the hypotheses used to try to make sense of what is going on inside the LLM, see Anthropic’s research paper.

What did OpenAI discover?

The OpenAI study, published on June 6, focuses on sparse autoencoders. The researchers go into detail in their paper on scaling and evaluating sparse autoencoders; in short, the goal is to make features more understandable to humans and therefore easier to steer. They are planning for a future in which “frontier models” may be more complex than today’s generative AI.

“We used our scheme to train various autoencoders on GPT-2 Small and GPT-4 activations, including a 16 million features autoencoder on GPT-4,” OpenAI wrote.

So far, they can’t explain all of GPT-4’s behavior: “Currently, passing GPT-4’s activations through a sparse autoencoder yields performance equivalent to models trained using about 10 times less compute.” But the research is another step toward understanding the “black box” of generative AI and potentially improving its safety.

How manipulating features affects bias and cybersecurity

Anthropic found three distinct features that may be relevant to cybersecurity: insecure code, code errors, and backdoors. These features may activate in conversations that don’t involve insecure code; for example, the backdoor feature activates in conversations or images about “hidden cameras” and “jewelry with hidden USB drives.” But Anthropic was able to experiment with “clamping” these specific features (in simple terms, increasing or decreasing their strength), which could help tune the model to avoid or tactfully handle sensitive security topics.
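The following Python sketch illustrates what adjusting the strength of a single feature could look like in the simplest case. It is a toy under stated assumptions, not Anthropic's method: the `clamp_feature` and `steer` helpers, the four-feature `decoder` matrix and all the numbers are hypothetical. One feature's activation is pinned to a chosen value, and the resulting change is pushed back into the activation vector through that feature's decoder direction.

```python
import numpy as np


def clamp_feature(features, index, value):
    """Return a copy of the feature vector with one feature pinned to `value`."""
    clamped = np.array(features, dtype=float, copy=True)
    clamped[index] = value
    return clamped


def steer(activation, features, decoder, index, value):
    """Shift an activation by the change that clamping one feature causes."""
    clamped = clamp_feature(features, index, value)
    delta = (clamped - features) @ decoder
    return activation + delta


# Toy setup: 4 features, each with a direction in a 3-dimensional activation space.
decoder = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0],
])
features = np.array([0.0, 0.5, 0.0, 1.2])
activation = features @ decoder

max_seen = 0.5  # hypothetical maximum activation observed for feature 1
steered = steer(activation, features, decoder, index=1, value=20 * max_seen)
print(steered)
```

Clamping to a multiple of the feature's observed maximum pushes the activation far along that feature's direction; clamping to zero would remove its contribution instead.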

Claude’s bias or hate speech can be adjusted with feature clamping, but Claude will resist some of its own statements. Anthropic researchers “found this response disturbing” and anthropomorphized the model when Claude expressed “self-hatred.” For example, when researchers clamped a feature related to hatred and slurs to 20 times its maximum activation value, Claude might output “That’s just racist hate speech from a pathetic robot…”

Another feature the researchers studied was sycophancy; they could tune the model to give excessive compliments to the person it was talking to.

What does research on AI autoencoders mean for enterprise cybersecurity?

Identifying some of the features that LLMs use to connect concepts can help tune the AI to prevent biased speech, or to prevent or troubleshoot situations where the AI might be lying to the user. Anthropic’s deeper understanding of how LLMs behave could give Anthropic more tuning options for its commercial customers.

Anthropic plans to use some of these findings to further explore topics related to generative AI and overall LLM safety, such as exploring which features would be activated or remain inactive when Claude is prompted to make a recommendation about producing a weapon.

Another topic that Anthropic plans to research in the future is: “Can we use the feature basis to detect when fine-tuning a model increases the likelihood of bad behavior?”

TechRepublic has reached out to Anthropic for more information. Additionally, this article has been updated to include OpenAI’s research on sparse autoencoders.
